Awesome: SoftRAID with RAID-5 rebuild

I’ll be reporting on SoftRAID version 5 in some detail. With the combination of RAID-5 and the OWC Thunderbay, software RAID has finally come of age: it is a 'killer' solution with many advantages over hardware RAID (the same goes for RAID 1+0 and RAID-1).

Fault tolerance in the context of storage means that when a drive fails, you keep working and keep your data. You replace the failed drive, the RAID rebuilds the missing data onto the replacement, and nothing has been lost. You can even keep working the entire time (at least with SoftRAID). Fault tolerance is critical to workflows where going down for even a few hours or a day is a big problem, but be clear that fault tolerance is not a backup.

SoftRAID 5 fault tolerance via RAID-5

Really cool demo of fault tolerance:

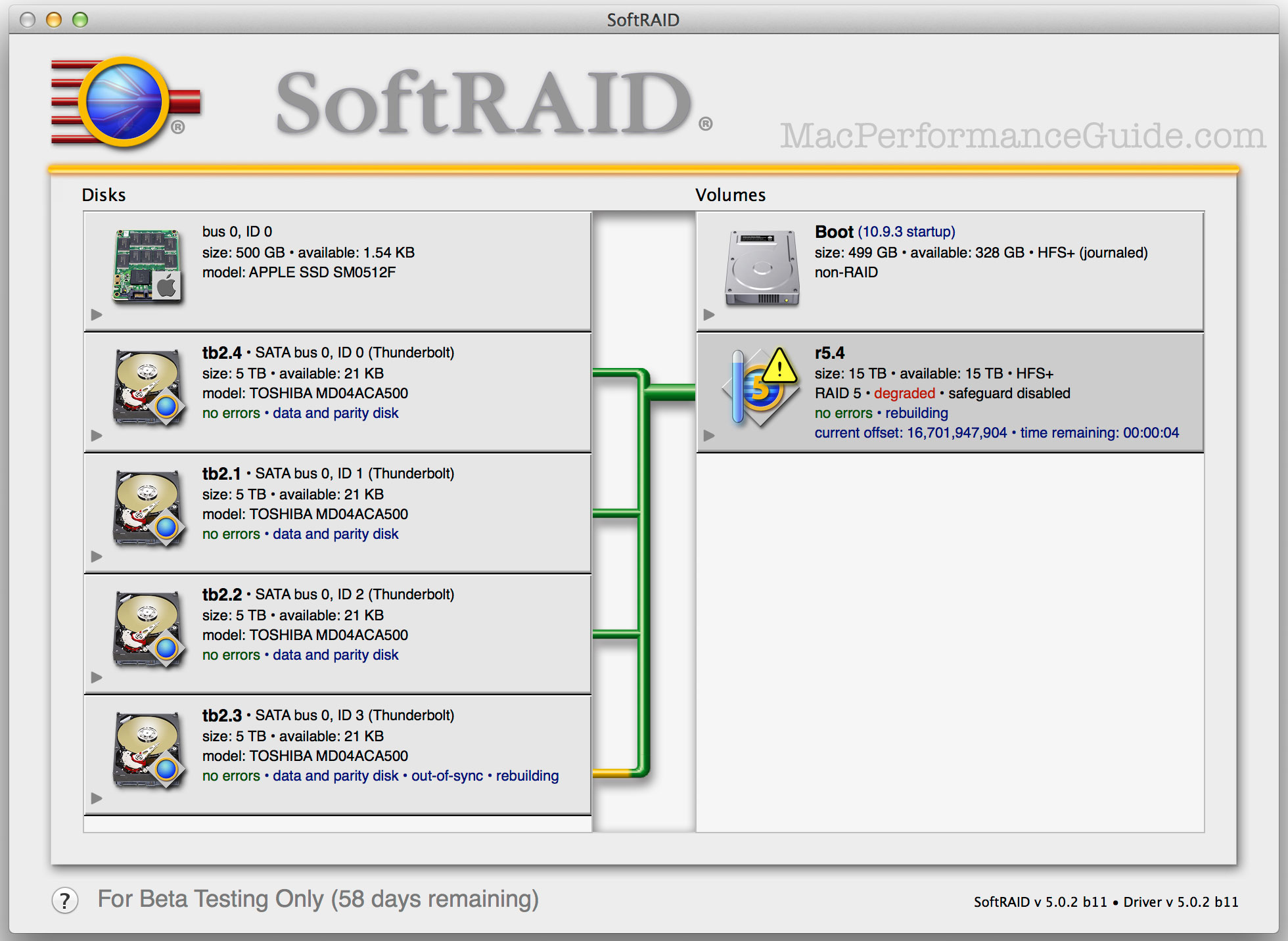

- I took the front cover off the OWC Thunderbay, a 20TB unit (four 5TB drives) that I had set up as a RAID-5 (tolerant of a single drive failure).

- I yanked out one drive (hot unplug). SoftRAID 5 (SR5) promptly notified me that a drive had gone missing.

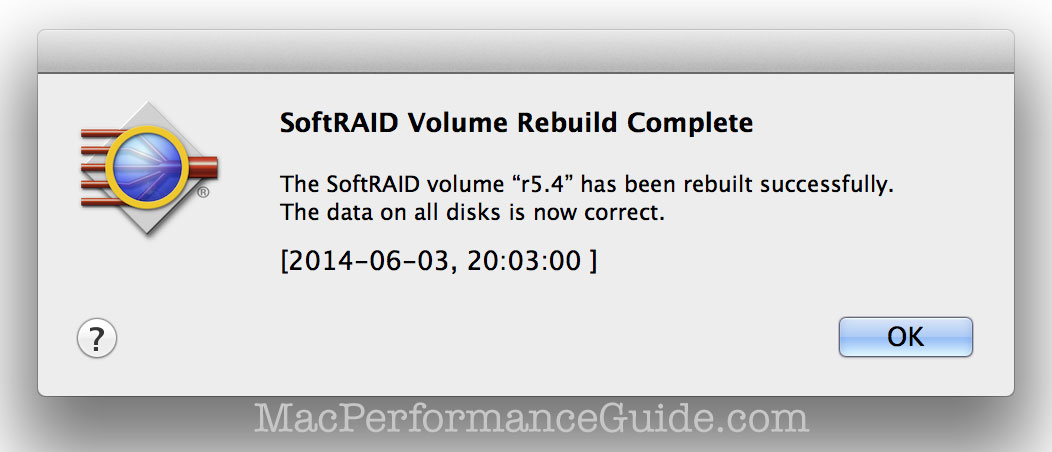

- I then reinserted the drive and in under a minute the RAID-5 had been rebuilt, good as before.

Had the drive failed entirely, the rebuild would of course have taken far longer (the entire volume would have to be rebuilt onto a replacement drive). But this level of fail/recover is incredibly cool, and not something hardware RAID delivers.
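Why a full rebuild is possible at all: RAID-5 stores parity, so in each stripe one block is the XOR of the other blocks, and any single missing block can be recomputed from the survivors. The sketch below illustrates that idea on in-memory byte blocks. It is a simplified model, not SoftRAID's implementation; the function names are hypothetical, and real RAID-5 rotates the parity block across drives from stripe to stripe rather than always using the last drive.

```python
from __future__ import annotations

from functools import reduce


def xor_blocks(blocks: list[bytes]) -> bytes:
    """XOR equal-length byte blocks together, byte by byte."""
    if len({len(b) for b in blocks}) != 1:
        raise ValueError("all blocks in a stripe must be the same length")
    return bytes(reduce(lambda a, b: a ^ b, column) for column in zip(*blocks))


def make_stripe(data_blocks: list[bytes]) -> list[bytes]:
    """One stripe: one data block per drive plus a parity block on the last drive.
    (Simplification: real RAID-5 rotates which drive holds parity per stripe.)"""
    return data_blocks + [xor_blocks(data_blocks)]


def rebuild_missing(stripe: list[bytes | None]) -> bytes:
    """Recompute the one missing block (marked None) from the surviving blocks."""
    missing = [i for i, block in enumerate(stripe) if block is None]
    if len(missing) != 1:
        raise ValueError("single parity can recover exactly one missing block per stripe")
    return xor_blocks([block for block in stripe if block is not None])


# Four "drives": three data blocks plus parity, as in a four-drive RAID-5 stripe.
stripe = make_stripe([b"ABCD", b"EFGH", b"IJKL"])
lost = stripe[1]
stripe[1] = None  # simulate pulling the second drive
assert rebuild_missing(stripe) == lost
```

Recomputing each missing block requires reading every surviving block in its stripe, so rebuilding onto a blank replacement touches the whole volume, which is consistent with a full rebuild taking far longer than reinserting the same drive.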

Cold spares

I also tested the fail/replace scenario, using a small volume to speed up the test cycle (a volume can be any size desired). I hot-unplugged a drive and then hot-plugged an uninitialized cold spare in its place. I did this twice, and each time the RAID-5 rebuilt successfully. I had also filled the volume with data, and I ran IntegrityChecker before, during and after the rebuild: perfect data integrity. SoftRAID also has a Validate command, and I ran that as well. All this after simulating two failures with two cold-spare replacements.
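The general idea behind verifying data across a rebuild is to record a cryptographic hash of every file while the RAID is healthy, then re-hash and compare later. The sketch below shows that technique with SHA-256; it is not IntegrityChecker's actual implementation, and the function names are hypothetical.

```python
from __future__ import annotations

import hashlib
from pathlib import Path


def hash_file(path: Path, chunk_size: int = 1 << 20) -> str:
    """SHA-256 of one file, read in 1 MiB chunks so large files are fine."""
    digest = hashlib.sha256()
    with path.open("rb") as f:
        for chunk in iter(lambda: f.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def snapshot(root: Path) -> dict[str, str]:
    """Map every file under root (by relative path) to its SHA-256 digest."""
    return {
        str(p.relative_to(root)): hash_file(p)
        for p in sorted(root.rglob("*"))
        if p.is_file()
    }


def compare(before: dict[str, str], after: dict[str, str]) -> dict[str, list[str]]:
    """List files that disappeared, appeared, or whose contents changed."""
    return {
        "missing": sorted(before.keys() - after.keys()),
        "added": sorted(after.keys() - before.keys()),
        "changed": sorted(k for k in before.keys() & after.keys() if before[k] != after[k]),
    }
```

For example, take `before = snapshot(Path("/Volumes/Test"))` while the RAID is healthy (the mount path is hypothetical), repeat the snapshot during and after the rebuild, and expect `compare(before, after)` to report three empty lists.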

There was one glitch during the second cold-spare test: the SoftRAID app did not start the rebuild, claiming the drive was not responding. A reboot cured that and allowed the rebuild to proceed. I am told that this is on the “hot list” of bugs to be fixed before SR5 goes final.

Rebuilding under intensive write activity had an issue, which developer Tim Standing is keen to fix before final release. Keep this in context: most solutions discourage or even preclude using a RAID-5 while a rebuild is in progress, and this is a beta version, NOT a final release.

Bottom line: this is a very exciting solution. I’ve spent a lot of time testing it and working with the developer, because I think it’s a breakthrough approach for the new world of multi-drive products like the OWC Thunderbay.

Partitioning too

This particular volume was 15TB (four 5TB drives minus one drive’s worth of parity), but SoftRAID allows any volume size. You could even go crazy with these four drives: a 4TB RAID-5 plus a 5TB RAID-0 stripe plus a RAID-5 or RAID 1+0 of the remainder. That’s not a recommendation (the sizes are only examples), but it shows just how powerful SoftRAID is.
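The capacity arithmetic behind these layouts is simple: RAID-5 across n drives yields (n - 1)/n of the raw space, RAID 1+0 yields half, and RAID-0 yields all of it, so four 5TB drives as one RAID-5 give (4 - 1) × 5TB = 15TB. The sketch below works through the "go crazy" example. It assumes every volume is striped evenly across all four drives, uses decimal TB, and ignores filesystem overhead; the names are hypothetical.

```python
from __future__ import annotations

# Assumed setup from the article: four 5TB drives (20TB raw).
DRIVE_COUNT = 4
DRIVE_TB = 5.0

# Fraction of the raw space each level turns into usable space (all drives used).
USABLE_FRACTION = {
    "RAID-0": 1.0,                               # striping only, no redundancy
    "RAID-5": (DRIVE_COUNT - 1) / DRIVE_COUNT,  # one drive's worth of parity per stripe
    "RAID 1+0": 0.5,                             # every block is mirrored
}


def raw_tb_for(level: str, usable_tb: float) -> float:
    """Raw space (summed over all drives) consumed by a volume of usable_tb."""
    return usable_tb / USABLE_FRACTION[level]


def usable_tb_from(level: str, raw_tb: float) -> float:
    """Usable capacity a level yields from raw_tb of raw space."""
    return raw_tb * USABLE_FRACTION[level]


def plan(volumes: list[tuple[str, float]], remainder_level: str) -> float:
    """Carve fixed-size volumes, then return the usable size of one final
    volume built from whatever raw space is left over."""
    raw_left = DRIVE_COUNT * DRIVE_TB
    for level, usable in volumes:
        raw_left -= raw_tb_for(level, usable)
    if raw_left < 0:
        raise ValueError("volumes exceed the raw capacity of the drives")
    return usable_tb_from(remainder_level, raw_left)


print(usable_tb_from("RAID-5", DRIVE_COUNT * DRIVE_TB))          # single RAID-5 volume
print(plan([("RAID-5", 4.0), ("RAID-0", 5.0)], "RAID-5"))        # remainder as RAID-5
print(plan([("RAID-5", 4.0), ("RAID-0", 5.0)], "RAID 1+0"))      # remainder as RAID 1+0
```

Under these assumptions the 4TB RAID-5 consumes about 5.33TB raw and the 5TB stripe 5TB raw, leaving about 9.67TB raw: roughly 7.25TB usable as RAID-5, or about 4.83TB as RAID 1+0.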

Two recommended scenarios for a 20TB OWC Thunderbay:

- A single 15TB RAID-5 volume, say for storing video. Backup strategies vary here, since 15TB won’t fit onto any single-drive backup.

- Three 5TB RAID-5 volumes (say, Master, Archive1, Archive2) for storing photography work in discrete chunks (e.g. 1998 through 2008 on Archive1, 2008 through 2012 on Archive2, current work on Master). Each is backed up to a single 5TB external drive, matching the volume size to the backup drive (a 5TB volume could also be cloned to a 4TB or even 3TB backup drive, so long as the actual data fits).
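Whether a 5TB volume can be cloned to a smaller backup drive depends only on how much data is actually on it. The sketch below checks that using Python's `shutil.disk_usage`; the 5% headroom for filesystem overhead is an assumed safety margin, not a figure from this article, and the function names and volume path are hypothetical.

```python
from __future__ import annotations

import shutil

TB = 10**12  # decimal terabyte, as drive capacities are usually quoted


def fits_on_backup(used_bytes: int, backup_capacity_bytes: int, headroom: float = 0.05) -> bool:
    """True if the data fits on the backup drive while leaving `headroom`
    (an assumed margin, default 5%) free for filesystem overhead."""
    return used_bytes <= backup_capacity_bytes * (1 - headroom)


def volume_fits(volume_path: str, backup_capacity_bytes: int, headroom: float = 0.05) -> bool:
    """Compare a mounted volume's used space against a backup drive's capacity."""
    usage = shutil.disk_usage(volume_path)  # named tuple: total, used, free (bytes)
    return fits_on_backup(usage.used, backup_capacity_bytes, headroom)
```

For example, `volume_fits("/Volumes/Archive1", 4 * TB)` would report whether Archive1 currently holds little enough data to clone onto a 4TB drive.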

diglloydTools™