30-bit Color Support in OS X El Capitan with Dual NEC Wide Gamut Displays + 32-bit Color

First, to clarify 10-bit vs 8-bit color, and what 30-bit color means:

- 10-bit color means 2^10 = 1024 distinct values per color channel (red/green/blue), whereas 8-bit color means 2^8 = 256 distinct values per color channel (256 gradations).

- 10-bit color has 4X as many gradations per channel (1024 vs 256). This is a BIG difference: gradients and smooth tonal transitions need not exhibit stepping artifacts (on a high-quality display, transitions between adjacent color values are invisible to the eye).

- 30-bit color refers to RGB (red/green/blue) having 10 bits for each color channel: 10 + 10 + 10 = 30 bits (by comparison, 24-bit color means 8 bits per color channel).

- If the image is grayscale, then 30-bit color means 10 bits (1024 values) of gray, since the R/G/B values are equal for gray. That assumes the display can actually render all 1024 shades, which is unlikely for most displays, particularly near black. Few displays can render a perfectly neutral grayscale of 256 values, let alone 1024; a color display would have to balance all three R/G/B subpixels perfectly to be completely neutral at 1024 brightness levels. Some non-linearity is always present. The best displays tightly control this behavior (within 1 delta-E) and can be considered neutral.

- 32-bit color = 24-bit color + an 8-bit alpha channel. It is not 30-bit (10 bits per channel) color. See the note at the end.

Depending on context, one may see “30-bit color” or “10-bit color”. The former refers to the total bits for an R/G/B pixel (10 + 10 + 10); the latter refers to the bits per color channel.
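The arithmetic in the list above can be checked in a few lines of Python. This is a minimal sketch; the helper names are hypothetical and only restate the formulas: levels per channel = 2^bits, bits per pixel = bits per channel × number of channels, and the number of encodable R/G/B combinations (the source of the “billions of colors” figure, not what any display actually reproduces).

```python
def levels_per_channel(bits_per_channel: int) -> int:
    # Distinct values one channel can hold: 8 bits -> 256, 10 bits -> 1024.
    return 2 ** bits_per_channel


def bits_per_pixel(bits_per_channel: int, channels: int = 3) -> int:
    # Total bits for one pixel, e.g. 3 x 10 = 30-bit color, 3 x 8 = 24-bit color.
    return bits_per_channel * channels


def encodable_colors(bits_per_channel: int, channels: int = 3) -> int:
    # R/G/B combinations the signal can describe (not what a display can reproduce).
    return levels_per_channel(bits_per_channel) ** channels


for bpc in (8, 10):
    print(f"{bpc} bits/channel: {levels_per_channel(bpc)} levels, "
          f"{bits_per_pixel(bpc)}-bit color, {encodable_colors(bpc):,} combinations")

# 10-bit has 4x the gradations of 8-bit, per channel.
print("ratio:", levels_per_channel(10) // levels_per_channel(8))

# 32-bit color is 8 bits x 4 channels (R, G, B + alpha), not 10-bit color.
print("32-bit color:", bits_per_pixel(8, channels=4), "bits")
```

Passing `channels=4` models the alpha case from the last bullet.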

Do not confuse color gamut (the range of colors a display can reproduce) with 30-bit color. A display can accept a 30-bit signal and yet display a far smaller range of colors than those 30 bits describe. Even a wide gamut display has “holes” in its gamut or limits with some colors; the “billions of colors” idea is marketing nonsense derived from 2^30 math. What really matters is how well a display reproduces its claimed gamut, whether it can be truly calibrated (not just faux calibrated), its accuracy (delta-E), its consistency over time and temperature, its true gamut at various brightness and contrast levels, etc. So “billions” is marketing-speak. Still, it’s a reasonable way of capturing the huge improvement possible with a high-end wide gamut display versus 24-bit color: huge wherever grayscale or color gradients come into play, such as the continuous tone of a sunset or sunrise sky, the brightness and/or color gradient of a cloud or fog bank, or shallow to deep water.
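To make the gradient point concrete, the sketch below quantizes a smooth ramp over a narrow tonal range at 8 and 10 bits per channel and counts the distinct code values. Fewer, larger steps across the same stretch of pixels is what shows up as banding. The range (an assumed 60% to 70% of full brightness, standing in for a sky gradient) and the sample count are arbitrary illustrative choices, and the function name is hypothetical.

```python
def distinct_steps(start: float, end: float, bits: int, samples: int = 10_000) -> int:
    """Quantize a smooth ramp (0.0 = black, 1.0 = full) and count distinct code values."""
    max_code = 2 ** bits - 1
    codes = set()
    for i in range(samples):
        level = start + (end - start) * i / (samples - 1)  # smooth, continuous tone
        codes.add(round(level * max_code))                 # what the signal can carry
    return len(codes)


# Hypothetical sky gradient spanning 60% to 70% of full brightness.
for bits in (8, 10):
    steps = distinct_steps(0.60, 0.70, bits)
    print(f"{bits}-bit: {steps} distinct steps across the gradient")
```

With roughly four times as many steps at 10 bits, each step is about a quarter the size, which is why banding visible at 8 bits can disappear in a good 10-bit pipeline.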

Adobe Photoshop support for 30-bit color

My contact at Adobe responded to my question about 30-bit color support:

Apple added 30-bit support for 10.11. It only works on certain displays and it works better on their 5K displays (even better on the latest generation iMac).

The next update for PS will support 30-bit color on Mac.

I followed up by asking “Why would it not work on any display supporting 10-bit input, e.g. any display that on a PC takes 10-bit?” To which my contact replied:

Apple supports 30-bit output on iMac 5K under 10.11, while other devices get dithering with that option checked.

I’m scratching my head on that one: why would a 10-bit capable display be sent dithered output? I’m hoping to hear more from Adobe to clarify this point.
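For readers unfamiliar with the term: dithering approximates a level the output cannot represent by mixing neighboring levels it can represent, so that the average comes out right. The sketch below shows one generic form, temporal dithering of a 10-bit value onto an 8-bit output by alternating two adjacent 8-bit codes over four frames. It illustrates the general technique only; the function name is hypothetical, and it is an assumption, not a description of how Apple actually dithers.

```python
def temporal_dither(value_10bit: int, frames: int = 4) -> list[int]:
    """Approximate one 10-bit level with a sequence of 8-bit codes.

    A 10-bit value v sits at v / 4 in 8-bit units (2**(10 - 8) = 4). The
    integer part is the base code; the remainder decides on how many of
    every 4 frames the next-higher code is shown instead.
    """
    if not 0 <= value_10bit <= 1023:
        raise ValueError("expected a 10-bit value (0-1023)")
    base, remainder = divmod(value_10bit, 4)
    # min(..., 255) keeps codes in 8-bit range; values 1021-1023 therefore clip to 255.
    return [min(base + 1, 255) if f % 4 < remainder else base for f in range(frames)]


codes = temporal_dither(514)
print(codes, "average:", sum(codes) / len(codes), "target:", 514 / 4)
```

The eye averages the alternating frames, which is why dithered 8-bit output can look close to true 10-bit output, though it is not the same thing.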

NEC PA series

Important note for professionals who need precision color: with Apple displays, it’s not clear whether there is any API for true calibration. Nor is it clear that 10-bit can be used for faux calibration of Apple or other brand displays. True calibration, as offered by NEC and Eizo, is a professional solution.

The NEC PA series displays have long been 10-bit panels with internal 12- or 14-bit true calibration. So I assume they’ll work great, but the Adobe “dithering” comment has me concerned that Apple has degraded things for non-Apple displays.
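Why does higher internal precision matter for calibration? A calibration correction remaps every input level through a curve; if the result is stored at the same precision as the input, some neighboring levels land on the same output value and are lost. The sketch below counts how many of the 1024 input levels of a 10-bit signal survive a correction curve at 8-, 10-, and 14-bit lookup-table precision. The power-law tweak (exponent 1.1) is an arbitrary stand-in for a real calibration curve, the function name is hypothetical, and this is not a description of NEC’s actual internal processing.

```python
def surviving_levels(input_bits: int, lut_bits: int, gamma_adjust: float = 1.1) -> int:
    """Count how many input levels stay distinct after a calibration curve.

    Each input code is mapped through a simple power-law curve (an assumed
    stand-in for a real correction) and quantized to the LUT's precision.
    """
    in_max = 2 ** input_bits - 1
    out_max = 2 ** lut_bits - 1
    outputs = {round(((code / in_max) ** gamma_adjust) * out_max)
               for code in range(in_max + 1)}
    return len(outputs)


for lut_bits in (8, 10, 14):
    print(f"10-bit input, {lut_bits}-bit LUT:",
          surviving_levels(10, lut_bits), "distinct levels of 1024")
```

At 10-bit LUT precision a few levels merge (mostly near black, where this curve compresses), while the 14-bit LUT keeps all 1024 apart.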

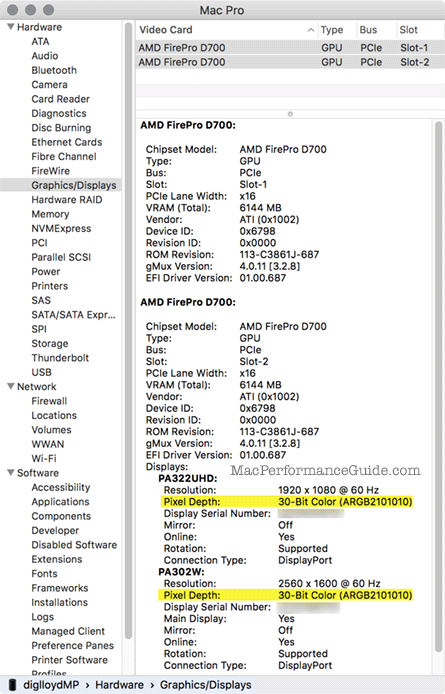

Shown above is a 2013 Mac Pro driving both a 2560 X 1600 NEC PA302W and a 3840 X 2160 NEC PA322UHD in 30-bit color (30-bit = 3 X 10-bit, for the R/G/B color channels).

Apple bug: one of my reasons for being skeptical of System Profiler as a reliable tool for graphics is that a 3840 X 2160 UltraHD display (PA322UHD) is listed as 1920 X 1080. The display’s resolution is 3840 X 2160, with a display scaling factor of 2:1. This bug is not seen with a native Apple display. For example, a MacBook Pro Retina is listed as “2880 X 1800 Retina” (not 1440 X 900), so the NEC PA322UHD ought to be listed as “3840 X 2160 Retina” or similar.
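The relationship between the reported and the actual resolution is simple: with 2:1 scaling, the physical pixel count is the logical (“looks like”) resolution times two in each dimension. A minimal sketch, with a hypothetical function name:

```python
def physical_resolution(logical: tuple[int, int], scale: int) -> tuple[int, int]:
    # Each logical point is drawn with scale x scale physical pixels.
    width, height = logical
    return (width * scale, height * scale)


print(physical_resolution((1920, 1080), 2))  # PA322UHD: reported 1920 X 1080 -> (3840, 2160)
print(physical_resolution((1440, 900), 2))   # MacBook Pro Retina -> (2880, 1800)
```

A report that lists the physical result, as it does for the MacBook Pro Retina, is the consistent behavior.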

32-bit color

Some Macs may report 32-bit color. 32-bit color means 24-bit color plus one 8-bit alpha channel.

It is curious that 30-bit/10-bit color does not support (or at least does not indicate) the availability of an alpha channel for “40-bit color”.
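To see where these bit counts come from, the sketch below packs channel values into a single integer at a given number of bits per channel. Four 8-bit channels (R, G, B, alpha) give 32-bit color; three 10-bit channels give 30-bit color; adding a 10-bit alpha (the hypothetical “40-bit color” above) gives 40 bits. The helper is hypothetical, and real graphics formats vary: some, for example, pair 10-bit RGB with a 2-bit alpha to fit in 32 bits.

```python
def pack_pixel(channels: list[int], bits: int) -> int:
    """Pack channel values (e.g. R, G, B[, A]) into one integer, `bits` bits each."""
    word = 0
    for value in channels:
        if not 0 <= value < 2 ** bits:
            raise ValueError(f"{value} does not fit in {bits} bits")
        word = (word << bits) | value  # make room, then append this channel
    return word


rgba8 = pack_pixel([255, 128, 0, 255], bits=8)       # 24-bit color + 8-bit alpha
rgb10 = pack_pixel([1023, 512, 0], bits=10)          # 30-bit color
rgba10 = pack_pixel([1023, 512, 0, 1023], bits=10)   # hypothetical "40-bit color"

# bit_length() equals the full width here only because the first channel is nonzero.
print(hex(rgba8), rgba8.bit_length(), rgb10.bit_length(), rgba10.bit_length())
```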

For example, the screenshot at right is from a late 2013 MacBook Pro Retina. It indicates that this model does not support 10-bit color, either on its internal Retina display or on the external NEC EA244UHD 4K UltraHD display (shown erroneously as 1080 X 1920, but really 2160 X 3840 in a portrait-oriented setup).

David K writes:

My system profiler (nMP, Mavericks, Eizo 303) shows me even 32-Bit color for my 2560 X 1600 Eizo 303W.

MPG: OS X Mavericks 10.9 does not have 30-bit support. 32-bit color means 24-bit color plus support for an alpha channel (24 + 8 = 32 bits). For comparable 10-bit support, it ought to show 40-bit color. But this sort of confusing presentation is yet another reason System Profiler needs some rough edges smoothed.

diglloydTools™
