Are your Backups Valid?

In the “old days”, the protocol for backups included doing a complete restore to verify the backup was good. This mainly related to tape archiving and physical integrity of the media. For some years in college, I was a sysop and did many a tape backup to those huge reels—stuff you’ll find in museums today.

It’s a law of nature that when you need your backup, something will go wrong, or has already gone wrong. Some of us learn the hard way, sometimes in small ways, and sometimes in devastating ways.

Today, we have software bugs like mice in a grain silo. And malware, plain ol' errors and mistakes. And forgetting. True bit rot is unusual, but Weird Shit happens.

What’s your plan?

If your computer was stolen or got fried tomorrow, what’s your plan for getting all your stuff back with confidence?

- One (1) backup? You’re playing with matches near an open can of gasoline. It won’t be fine.

- Can all files be read without errors? Drives crap out unexpectedly, usually just when you need them to work.

- What if the backup had an error or was missing things; how would you know?

- The backup might have been good when you made it, but what about a year later? Features like verify-after-write are not a bad idea, but they only prove the backup was good at the moment it was written; they tell you nothing about its condition after that.

- Backup of a backup... I do that all the time. If I can prove that the copy of a copy of a copy is valid, then the whole chain of copies is (or at least was) valid, because an error anywhere in the chain would have carried through to the last copy. This can save a ton of time.

- Is every bit of every file intact? Which ones have problems? How can you check automatically?
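
One generic way to answer the last few questions automatically is a checksum manifest: record a cryptographic hash of every file when the data is known to be good, then re-hash any backup later and compare. The sketch below is only an illustration of that idea, not a description of how any particular product works; the function names (`file_digest`, `build_manifest`, `verify`) and the choice of SHA-256 are assumptions made for the example.

```python
import hashlib
from pathlib import Path


def file_digest(path, chunk_size=1 << 20):
    """Return the SHA-256 hex digest of one file, reading it in chunks."""
    h = hashlib.sha256()
    with open(path, "rb") as f:
        for chunk in iter(lambda: f.read(chunk_size), b""):
            h.update(chunk)
    return h.hexdigest()


def build_manifest(root):
    """Map each file's path (relative to root) to its digest."""
    root = Path(root)
    return {
        p.relative_to(root).as_posix(): file_digest(p)
        for p in sorted(root.rglob("*"))
        if p.is_file()
    }


def verify(root, manifest):
    """Compare the files under root against a previously saved manifest.

    Returns the relative paths that are missing, changed, or extra.
    """
    current = build_manifest(root)
    missing = sorted(set(manifest) - set(current))
    extra = sorted(set(current) - set(manifest))
    changed = sorted(
        name for name in manifest
        if name in current and manifest[name] != current[name]
    )
    return {"missing": missing, "changed": changed, "extra": extra}
```

In use, you would build the manifest from the original, save it (for example as JSON) alongside the data, and run `verify` against any backup, including a copy of a copy. Because the paths are stored relative to the root, the same manifest works for every copy; three empty lists mean every file is present and every bit matches.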

Get Data Integrity Assurance now

Download page for existing customers.

Or keep guessing about whether your backups suck.

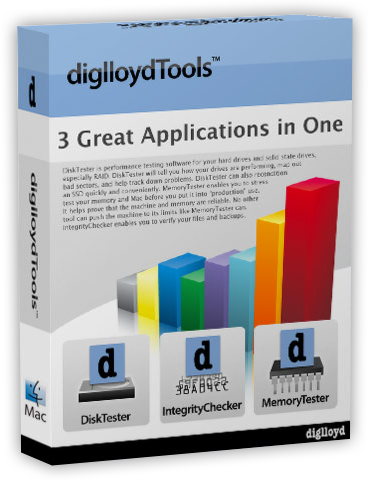

diglloydTools™
