Memory Fails in Web Server, with Sporadic Problems Making the Cause Mysterious, Until Today

I spent most of my afternoon (4 hours) in a Sunnyvale data center in a 100 decibel environment (shout to be heard). It would have been intolerable and impossible without my Sony WH-1000XM4 noise-canceling headphones.

I was down there thinking I needed an hour or less to fix a few corrupted git pack files. Turns out that there was no file corruption, but the problem was seemingly much worse—bad memory in the server. Symptoms included:

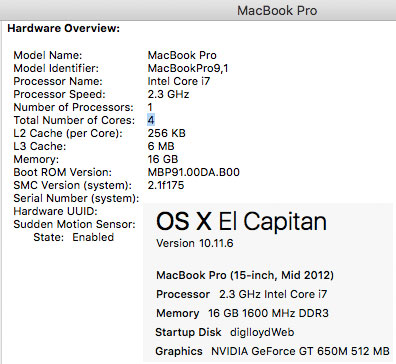

- macOS El Capitan sporadically crashing with a panic twice a week or so.

- Java Virtual Machine (JVM) crashing randomly at startup about once a day with an internal memory check failure.

- Source code versioning software 'git' failing 'git gc' and 'git fsck' with corruption/hashing errors, with problems appearing, disappearing, and changing from run to run, plus the apparently corrupted index file from last week.

- Random reports of file corruption by IntegrityChecker Java (files randomly fail to validate, then validate, not consistent).

The apparent file corruption at first made me think of a 'git' version mismatch problem causing real data corruption. But I quickly found that even known-good freshly-cloned data was being flagged as corrupted, and which files were flagged kept changing: good, then bad, then good, then bad, and different files each time.

It finally 'clicked' that bad memory might be the issue.

As it turns out, files were not corrupted. What both 'git' and IntegrityChecker were reporting as bad hashes turned out to be a stored file hash with the correct value not matching a just-computed hash. The just-computed hash was different because bad memory was causing bit errors while the file was being read and hashed.
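One way to tell flaky hardware from real on-disk corruption is to hash the same file several times and see whether the result is stable. The sketch below is only an illustration of that idea, not how 'git' or IntegrityChecker work internally: the function names are hypothetical, and SHA-256 is an assumed choice of hash. Repeated reads may also be served from the operating system's file cache, so a stable result does not prove the memory is good.

```python
import hashlib


def file_digest(path, chunk_size=1 << 20):
    """Return the SHA-256 hex digest of the file at `path` (assumed hash choice)."""
    h = hashlib.sha256()
    with open(path, "rb") as f:
        # Read in chunks so large pack files do not need to fit in memory at once.
        for chunk in iter(lambda: f.read(chunk_size), b""):
            h.update(chunk)
    return h.hexdigest()


def classify(stored_digest, observed_digests):
    """Classify repeated hash results against the stored (expected) digest.

    "unstable": the digests disagree with each other, so suspect hardware.
    "ok":       every digest matches the stored value.
    "mismatch": the digests agree with each other but not with the stored
                value, which looks more like genuine file corruption.
    """
    distinct = set(observed_digests)
    if len(distinct) > 1:
        return "unstable"
    if distinct == {stored_digest}:
        return "ok"
    return "mismatch"


def check_file(path, stored_digest, rounds=5):
    """Hash `path` `rounds` times and classify the outcome."""
    return classify(stored_digest, [file_digest(path) for _ in range(rounds)])
```

A file that reports "unstable", or that flips between "ok" and "mismatch" across separate runs, matches the good-then-bad-then-good pattern described above and points away from the data itself.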

The memory modules failed after ~9 years of use, including ~4 years of 24X7 server use. OWC replaced the modules under their lifetime warranty.

Replacing the bad memory

By good luck (planning for the worst), I had brought along a spare server machine, slower, but fully capable of replacing the main one, and with the same kind of memory modules. Once I confirmed the bad memory in the server, I opened it up and swapped out its memory for the modules in the backup machine.

Once the memory was swapped out in the server, all the issues went away, with no further problems despite aggressive stress testing.
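For readers who want a quick sanity check of their own, a crude user-space pattern test can be sketched as follows. This is an assumption-laden illustration, not the stress test used here: the function name, buffer size, and bit patterns are all hypothetical choices, and a user-space script cannot choose which physical memory it exercises, so a clean result proves little. A dedicated memory tester run outside the operating system is far more thorough.

```python
def pattern_test(size_bytes=64 * 1024 * 1024, patterns=(0x00, 0xFF, 0xAA, 0x55)):
    """Fill a buffer with each byte pattern, read it back, and count bad bytes.

    Returns the total number of bytes that did not read back as written.
    All parameters are illustrative defaults, not recommended values.
    """
    errors = 0
    buf = bytearray(size_bytes)
    for p in patterns:
        # Write the pattern across the whole buffer.
        buf[:] = bytes([p]) * size_bytes
        # Count how many bytes still hold the pattern; any shortfall is an error.
        errors += size_bytes - buf.count(bytes([p]))
    return errors


if __name__ == "__main__":
    print("bad bytes:", pattern_test())
```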

UPDATE: OWC stood by their lifetime warranty for the memory. The new memory is installed and working in the MacBook Pro from which memory was borrowed.

The 2012 MacBook Pro — classic that still sells

You can still buy the 2012 MacBook Pro used at MacSales.com. Not a speed demon by any metric, but they last forever. They make good web servers, music servers, etc. The best model is the "late 2012" 15-inch, with a 4-core CPU (4 real, 8 virtual cores).

My web server has been a late-2012 MacBook Pro 4-core running 24X7 for some years now. Not the fastest machine around, but incredibly reliable. Prior to that, 2-core models ran for most of a decade without any issues whatsoever. Any downtime until now had been purely software issues (aside from the rare data center glitches), and those were few and far between and generally my goofs.

To its credit, Apple once long ago built hyper-reliable and durable machines (upgradeable too!). The 2012 MacBook Pros have been more reliable over the past ~13 years than any data center yet (Facebook and Google outages anyone?). No subsequent Apple MacBook Pro has been nearly as solid, and we left the path of upgradeability some years ago. Apple now builds flaky, non-upgradeable MacBook Semi-Pro machines: shiny throw-away models built to the dilettante design-of-the-day ideas (no SD card slot? no USB-A ports? no ethernet port?).

diglloydTools™
