CPU Cores Explained

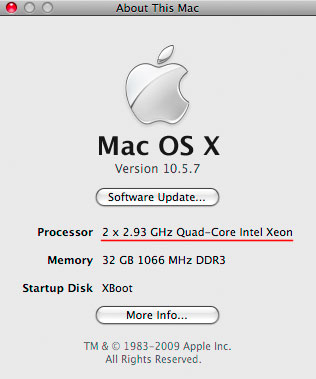

CPU is a loosely used term, but it traditionally refers to a single physical computer chip, the computing brain inside your Mac. The MacBook Pro, MacBook, and iMac all have a single CPU chip; certain models of the Mac Pro (2012) have dual CPUs, each with four or eight cores.

On each physical CPU, there can be more than one CPU core (“core”), with each core fully capable of running the entire system and applications all by itself.

As of 2012, Intel chips used in Macs have 2/4/6/8/12 cores. Future Intel chips will have even more cores.

The emerging multi-core reality is the impetus for new technology from Apple, and increased attention from application developers to exploiting all that computing power. Whither Adobe Photoshop CS4, with its gross underutilization of even two cores for many tasks?

There are still many inefficient applications which do not use the available CPU cores, confining their activities to “single thread” mode, using only a single core. This will slowly change, as market pressures come to bear, but four cores is enough for most users, with six cores being plenty except for specialized tasks.

How many cores

Check how many CPU cores your system has by choosing .

In the example at right, the example Mac Pro Nehalem has two 2.93GHz CPUs, each with four cores, for an eight-core system.

However, the Nehalem also has a “virtual core” technology that means there are 16 virtual cores (which in practice offer little or no benefit).
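The core count can also be checked from a script. The following is a minimal Python sketch, assuming Python 3 is installed; the function names are illustrative. `os.cpu_count()` reports logical (virtual) cores, so it would be expected to report 16 on the Mac Pro described above, while the macOS `sysctl` key `hw.physicalcpu` reports physical cores.

```python
import os
import platform
import subprocess


def logical_cores():
    """Logical (virtual) core count as seen by the OS; None if unknown."""
    return os.cpu_count()


def physical_cores_macos():
    """Physical core count via sysctl on macOS; None elsewhere or on failure."""
    if platform.system() != "Darwin":
        return None
    try:
        out = subprocess.run(
            ["sysctl", "-n", "hw.physicalcpu"],
            capture_output=True, text=True, check=True,
        ).stdout
        return int(out.strip())
    except (OSError, subprocess.CalledProcessError, ValueError):
        return None


if __name__ == "__main__":
    print("logical cores:", logical_cores())
    print("physical cores (macOS only):", physical_cores_macos())
```

If the two numbers differ by a factor of two, the difference is the virtual cores.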

Examples of CPU usage

All 16 virtual cores being utilized at 100% (1600% total)

Photozoom Pro 3

This is what you want.

When using Activity Monitor’s window (), the ideal scenario is to see all CPU cores busy; black areas indicate idle time.

Shown at right is the CPU usage for Photozoom Pro 3 running a system stress test. All 16 virtual cores are fully utilized. Green indicates “user” time and red indicates “system” time. It’s all usage; there are no black areas, showing that none of the CPU cores are idle at any time.

(quad core system)

99% of a CPU is being used here, cycling between cores

(Photoshop CS4 saving a large PSD file)

This is bad, a waste of computing potential.

At the opposite extreme, some programs are single-threaded; they make use of only a single core, no matter how many cores are available. Photoshop CS4 is single-threaded for opening and saving files, a serious performance problem which has gone unaddressed for years.

(quad-core system)

Only 200% (2 cores) are being used out of 400% possible

This is “ok” but a waste of potential.

(Photoshop CS4 Smart Sharpen, quad-core Mac Pro 3GHz)

Photoshop CS4 does make some use of a multi-core system, but seems to limit its usage to about two cores, which means that a quad-core Mac Pro is used at half its potential speed, and an 8-core Mac Pro at 25% of its potential. The reasons for this are unclear, but the limit of about two cores’ worth of CPU usage holds over a variety of tasks, and Photoshop CS4 often doesn’t even do that well.

More on cores

Threading

Some applications have few or no CPU-intensive operations, but can benefit from threading—doing more than one task at once, such as copying files, emptying the trash and compressing a folder at the same time.

Threading is valuable even on single-core systems and is particularly helpful when the task depends on disk or network I/O. The failure to use threading is often more of an issue than multi-core support because it prevents the user from doing useful work while the task runs.

An egregious example is Adobe Photoshop CS4, which prevents any other useful work while it opens or saves a (single) file, which can take several minutes for large files. See How to speed up opening and saving files in Photoshop.

Teamwork

By working in parallel, multiple CPU cores can greatly accelerate multiple tasks, or pieces of the same task. This is called task threading, or simply threading or threaded execution.

Assuming you’ve configured your Mac for optimal performance, more and more applications in 2008/2009 are running or will soon run at speeds in direct proportion to how many CPU cores your Mac has. This means that an 8-core system can potentially run about 4 times faster than a dual-core system!

Actual performance gains

Actual multi-core performance gains are subject to memory bandwidth limitations (competition for access to memory by multiple cores), synchronization (“handshake”) overhead, and the programming skill of the application developer—threaded code is not easy to write well or correctly.

Some applications will run at the same speed on single-core, dual-core or eight-core systems because the job they are doing can’t be divided into pieces easily. Only one person can drive a car at a time! There are plenty of computing tasks that have such limitations; parallel execution (threading) has been the subject of computer science research for many decades.
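The effect of work that cannot be divided is commonly modeled with Amdahl's law, a standard formula that this article does not name but which fits the point. The sketch below is an idealized model: it ignores memory contention and synchronization overhead, and the function name and the fractions used are illustrative.

```python
def amdahl_speedup(parallel_fraction, cores):
    """Ideal speedup over one core when only part of a job can run in parallel.

    parallel_fraction: share of the single-core run time that can be split
    across cores (0.0 = fully serial, 1.0 = fully parallel).
    """
    if not 0.0 <= parallel_fraction <= 1.0:
        raise ValueError("parallel_fraction must be between 0 and 1")
    if cores < 1:
        raise ValueError("cores must be at least 1")
    serial_fraction = 1.0 - parallel_fraction
    return 1.0 / (serial_fraction + parallel_fraction / cores)


if __name__ == "__main__":
    for fraction in (0.0, 0.5, 0.9, 1.0):
        for cores in (2, 4, 8):
            speedup = amdahl_speedup(fraction, cores)
            print(f"parallel {fraction:.0%}, {cores} cores: {speedup:.2f}x")
```

In this model a fully serial job gains nothing from extra cores, and a job that is only half parallel cannot reach 2x no matter how many cores are added.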

Yet in too many cases, it’s simply bad engineering, sloppy design for today’s computing world, where CPU clock speeds are not increasing, but the number of CPU cores is doubling every 18 months. Large numbers of CPU cores are the future, along with using cores from more than one machine.

Don’t forget the graphics chip

Another trend, which can be thought of as “more cores” is the emergence of graphics cards whose processing power can be re-purposed transparently for general computing. Programs like Apple’s Aperture are already doing this, and expect to see it emerge as a Big Deal in 2009.

Why MacBook Pro, MacBook, iMac are limiting

As of late 2008, only the Mac Pro (and Xserve) offer more than two cores. The other machines I term dead-end Macs, because improvements in application code still have only two cores to exploit, whereas Mac Pros with 4 or 8 cores have headroom for future improvement in applications, extending their service life.

Limitations to multi-core performance

Memory bandwidth

Memory bandwidth (speed) is a major “bottleneck” with multi-core systems. Think of a garden hose as compared with a fire hose—the fire hose allows a much greater volume of water to pass through it per unit time: perhaps 200 versus 5 gallons per minute.

With memory, it’s gigabytes per second: that’s bandwidth. With the network or internet, it’s megabits per second (8 bits per byte); in all cases it is bandwidth or the size of the “pipe” that places a hard limit on anything that wants to push (write/upload) or pull (read/download) through it.
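Because network speeds are quoted in bits and file sizes in bytes, the conversion is easy to get wrong. A minimal sketch follows; the 100 megabit link and 400MB file are hypothetical numbers chosen for illustration, and protocol overhead is ignored.

```python
BITS_PER_BYTE = 8


def megabits_to_megabytes(megabits_per_s):
    """Convert a link speed in megabits/sec to megabytes/sec."""
    return megabits_per_s / BITS_PER_BYTE


def transfer_seconds(size_megabytes, link_megabits_per_s):
    """Best-case time to move a file over a link, ignoring all overhead."""
    return size_megabytes / megabits_to_megabytes(link_megabits_per_s)


if __name__ == "__main__":
    print("100 megabit link in MB/sec:", megabits_to_megabytes(100))
    print("seconds for a 400MB file:", transfer_seconds(400, 100))
```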

All CPU cores must share access to the same physical memory and therefore have to take turns to read from or write to it. Like the Ladies bathroom at a movie theatre, a long queue can form waiting for access: the cores actually become idle for much or even most of the time that they are allegedly computing, forced to wait for their memory access operations to complete. This is why one can observe full CPU utilization on an 8-core system, but see only a -0.1X to 1.5X speedup over a 4-core system; the cores are “actively waiting” for memory access. That’s right: memory contention and other overhead can make a 4-core system run faster than an 8-core system. Scalability keeps improving with newer chips and faster memory, however.

With a well-engineered program, memory bandwidth is one key reason why an eight core machine might not run any faster than a quad-core machine, or might even run a little bit slower. However, programs that are primarily computation-intensive can show exactly double the performance with eight cores instead of four.
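A rough way to picture both cases is a two-limit model: the job takes as long as the slower of its computation (which divides across cores) and its memory traffic (which does not). This is a simplified sketch that assumes computation and memory access overlap perfectly; the function name and all numbers are hypothetical.

```python
def estimated_seconds(compute_core_seconds, gigabytes_moved, cores, memory_gb_per_s):
    """Run time as the larger of the compute limit and the memory limit."""
    compute_limit = compute_core_seconds / cores        # shrinks with more cores
    memory_limit = gigabytes_moved / memory_gb_per_s    # shared by all cores
    return max(compute_limit, memory_limit)


if __name__ == "__main__":
    # Computation-heavy job: eight cores halve the time of four cores.
    print(estimated_seconds(80, 100, 4, 10), estimated_seconds(80, 100, 8, 10))
    # Memory-heavy job: eight cores are no faster than four.
    print(estimated_seconds(40, 200, 4, 10), estimated_seconds(40, 200, 8, 10))
```

The model cannot show an 8-core system running slower than a 4-core one; that takes contention and synchronization overhead, which it leaves out.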

I/O speed

Like memory bandwidth, disk speed or network speed can throttle performance. Any program capable of using all 8 cores might need a lot of data (think HD video). Other programs are all about computation, and have no such dependency.

If a disk can read 70MB/sec (a common figure for even a fast laptop hard drive), then a program that needs to read a 400MB file will take a minimum of ~6 seconds to open it, even if it has no computation to do. Using a striped RAID array capable of 400MB/sec would drop that overhead down to one second.
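The arithmetic above is simply file size divided by sustained throughput. A small sketch using the same figures (the function name is illustrative):

```python
def min_read_seconds(file_megabytes, throughput_mb_per_s):
    """Lower bound on the time to read a file at a sustained throughput."""
    if throughput_mb_per_s <= 0:
        raise ValueError("throughput must be positive")
    return file_megabytes / throughput_mb_per_s


if __name__ == "__main__":
    print(f"laptop drive at 70MB/sec:  {min_read_seconds(400, 70):.1f} seconds")
    print(f"striped RAID at 400MB/sec: {min_read_seconds(400, 400):.1f} seconds")
```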

A well-designed program will be able to process data while simultaneously reading from disk, leading to a situation in which the CPU speed has hardly any effect on execution time. This offers huge potential: by making a faster RAID, one can improve performance up to the point where the CPU cores are fully utilized.
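One common way to overlap reading and processing is a reader thread that feeds chunks into a bounded queue while the main thread works on them. The sketch below is one possible arrangement, not how any particular application does it; the function name, chunk size, and queue depth are assumptions.

```python
import io
import queue
import threading


def process_while_reading(stream, chunk_size=1 << 20, process=len):
    """Read chunks on a background thread while processing them on this one."""
    chunks = queue.Queue(maxsize=4)   # bounded, so the reader cannot race far ahead
    errors = []

    def reader():
        try:
            while True:
                data = stream.read(chunk_size)
                if not data:
                    break
                chunks.put(data)
        except Exception as exc:      # hand read errors to the caller
            errors.append(exc)
        finally:
            chunks.put(None)          # sentinel: no more data

    thread = threading.Thread(target=reader, daemon=True)
    thread.start()

    results = []
    while True:
        data = chunks.get()
        if data is None:
            break
        results.append(process(data))  # overlaps with the next read
    thread.join()
    if errors:
        raise errors[0]
    return results


if __name__ == "__main__":
    sizes = process_while_reading(io.BytesIO(b"x" * 10), chunk_size=4)
    print("chunk sizes processed:", sizes)
```

With real files, `stream` would be an object returned by `open(path, "rb")`, and `process` would be whatever work the program does per chunk.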

The lesson here is to be aware of what’s actually going on with the software you actually run—if it’s limited by disk speed, invest in a faster disk or RAID before considering a faster computer. And sometimes different software is the answer.

Software and scalability

Another multi-core problem is that software today is frequently not written to take advantage of multiple cores efficiently; it is technically challenging to write efficient threaded code that is free of threading bugs (threading bugs are those that result from incorrectly-written threaded code). See Application support for multiple CPU cores.

Threading/threaded means multiple simultaneous “threads” of computation, ideally one or more per CPU core. It’s like having a team of workers, as opposed to a single one.

A highly scalable program will run approximately N times as fast on an N-core system as on a single-core system, at least for reasonable values of N (e.g. up to 16 cores). Creating a scalable program requires a very high degree of expertise; it is relatively easy to write code that runs efficiently on dual-core systems, runs a little faster on quad-core systems, then shows no further improvement beyond four cores. Adobe Photoshop CS3 is an example of a popular program that scales fairly well to dual-core systems, but shows little improvement on quad-core systems.

Some computing problems cannot be solved in a threaded (parallel) fashion; there are too many serial (ordered) dependencies to allow multiple worker threads to work simultaneously. But the fact remains that most programs are just poorly designed for today’s multi-core world, and that includes popular programs such as Adobe Photoshop CS3, which rarely utilizes more than two of four cores on my quad-core Mac Pro (125% - 200% is typical).

Sometimes it’s just a matter of failure to do anything halfway intelligent. For example, Photoshop CS3’s Save command is not only single-threaded (uses only one CPU core), but it’s modal—it forces the user to wait until the operation completes. There is no legitimate reason that a Save could not be in progress while the user works on another image.
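To illustrate what a non-modal save could look like in principle, here is a minimal Python sketch that hands the write to a background worker and returns immediately. It says nothing about how Photoshop is built; the function name and the single-worker pool are assumptions, and a real application would also need to snapshot the document so later edits do not alter the data being written.

```python
import os
import tempfile
from concurrent.futures import ThreadPoolExecutor

# One worker, so saves are written in the order they were requested.
_saver = ThreadPoolExecutor(max_workers=1)


def save_in_background(path, data):
    """Start writing data to path and return a Future without waiting."""
    def _write():
        with open(path, "wb") as f:
            f.write(data)
        return len(data)
    return _saver.submit(_write)


if __name__ == "__main__":
    path = os.path.join(tempfile.mkdtemp(), "image.bin")
    pending = save_in_background(path, b"\0" * 1_000_000)
    print("save started; the caller is free to do other work")
    print("bytes written:", pending.result())  # block only when the result is needed
```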
