Validating a Hard Drive or SSD or RAID (DiskTester fill-volume)


With new hard drives, it is strongly recommended to qualify each drive separately before putting it to use, especially for a RAID setup, since the RAID depends on all drives performing without errors and with similar performance.

New hard drives are generally not media tested and might have issues that show up only in use, e.g., some areas of the drive are bad, possibly too large a portion for the drive to last more than a short time.

The DiskTester fill-volume command is ideal for forcing out such issues because it writes nearly all the capacity of the drive, and it does so through the entire call chain (file system calls to driver to drive and back). A driver-level test bypasses part of this flow of control. Real programs use the full chain of control, so testing should mimic that.

The DiskTester fill-volume command has several benefits:

- Nearly all the drive sectors are written, forcing the drive to actually write to and read from nearly all sectors.

- The read/verify phase (after writing) forces the drive to read all those sectors that were written, and DiskTester also verifies that the data matches what was written. The act of reading may by itself reveal an error.

- The output can and should be graphed to visualize performance across the drive. It is not only informational but can show erratic spikes or irregularities.
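Alongside a graph, a quick scan for sudden jumps can point at the samples worth a closer look. The sketch below is only an illustration: it assumes the speeds have already been saved to a plain text file with one MB/sec value per line, which is a hypothetical format and not DiskTester's actual output. The function name and the percentage threshold are also assumptions.

```shell
# flag_speed_jumps FILE PERCENT
# FILE holds one MB/sec value per line (hypothetical format, see above).
# Prints every sample that differs from the previous one by more than PERCENT.
flag_speed_jumps() {
    awk -v pct="$2" '
        NR > 1 && prev > 0 {
            change = ($1 - prev) / prev * 100
            if (change > pct || change < -pct)
                printf "sample %d: %s -> %s MB/sec\n", NR, prev, $1
        }
        { prev = $1 }   # remember this sample for the next comparison
    ' "$1"
}
```

Comparing each sample with its neighbor, rather than with an overall average, avoids flagging the gradual slowdown that hard drives normally show toward the end of their capacity.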

In addition, the following issues can be detected indirectly:

- Bugs in driver software, management software, or hardware. These bugs typically result in system hangs (frustrating but valuable nonetheless).

- Bad hardware of some sort: cable with sporadic 'noise' issues, enclosure with heat or noise issues, etc.

Testing 1/2/3/4/5/N drives simultaneously.

Although the basic GUI can be used to test a drive, the DiskTester command line makes it possible to test many drives simultaneously (one per Terminal window). There is no a priori limit, but do not test so many at once that the connection bus is saturated; e.g., use one hard drive per eSATA or USB3 connection.

Testing a RAID

Once individual drives are tested, a RAID can then be assembled. If the drives are already part of a hardware RAID, then simply test the RAID as a whole. But it is always preferred to test drives singly first, in order to rule out an erratic performer.

Case Study: testing 5 samples

MPG ordered five samples of the Hitachi 7K4000 for use as testing drives for various devices and RAID setups. Especially for RAID, it is important to qualify the drives, to rule out any drive that is slow or erratic for some reason.

The five drives were connected via eSATA (each with its own full bandwidth cable) and five instances of DiskTester were started, one for each of the five drives.

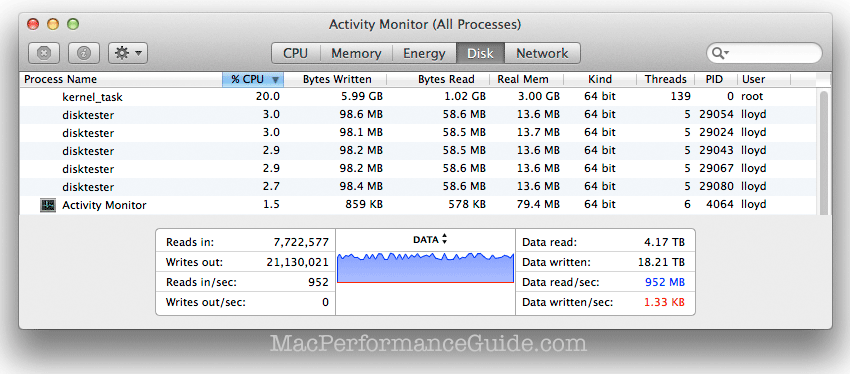

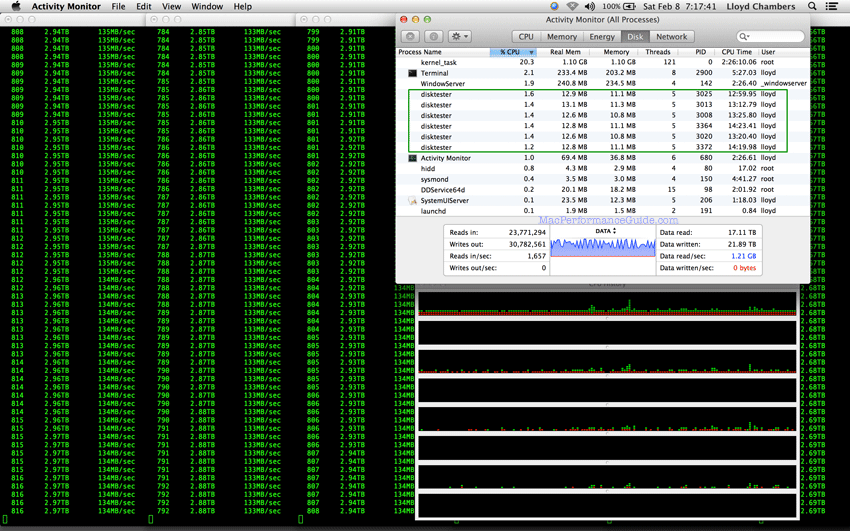

As those five drives are being tested in the read phase, a total of somewhere between 800 and 960MB/sec is being read. Each 'disktester' also consumes a few percent CPU in a separate thread during the read phase (to verify data).
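The aggregate figure can be turned into a rough per-drive average by simple division, which is handy when comparing against what a single connection can carry. This is a minimal sketch that assumes the five drives share the load evenly; the function name is illustrative, and the per-drive numbers are derived here rather than measured in the test.

```shell
# per_drive_rate TOTAL_MB_PER_SEC DRIVE_COUNT
# Prints the average MB/sec per drive, assuming an even split.
per_drive_rate() {
    awk -v total="$1" -v n="$2" 'BEGIN { printf "%.0f\n", total / n }'
}

per_drive_rate 800 5   # prints 160
per_drive_rate 960 5   # prints 192
```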

One of the drives was bad; see the discussion that follows.

    # fill the volume 't2' to capacity with 16MB writes/reads
    diglloyd:DIGLLOYD lloyd$ disktester fill-volume --xfer 16M t2      <= command
    DiskTester 2.2 64-bit, diglloydTools 2.2.2, 2013-10-26 13:20
    OS X 10.9.2, 24 CPU cores, 81920MB memory
    Wednesday, January 1, 2014 at 2:44:54 PM Pacific Standard Time
    Volume: t2
    Num files: 1000
    Space to fill: 3.62TB
    File size: 3.70GB
    Transfer size: 16384KB
    Fill with: "0x0000000000000000"
    Free space to remain: 22.3GB = 0.60%
    Verify: true
    Creating 1000 files of size 3.70GB on volume "t2"
    Speed shown includes file system create/open/allocate/write-- real world time.
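The reported numbers hang together, which is a useful sanity check before committing to a run of many hours. The sketch below recomputes the "free space to remain" percentage from the other two figures. It rests on two assumptions that are not confirmed by the output itself: that 1TB means 1024GB here, and that the percentage is taken relative to the space to fill plus the space left free. The function name is illustrative.

```shell
# free_space_percent FILL_TB FREE_GB
# Prints the free space as a percentage of (space to fill + free space).
free_space_percent() {
    awk -v fill_tb="$1" -v free_gb="$2" 'BEGIN {
        fill_gb = fill_tb * 1024    # assumption: 1TB = 1024GB
        printf "%.2f\n", 100 * free_gb / (fill_gb + free_gb)
    }'
}

free_space_percent 3.62 22.3   # prints 0.60
```

Under those assumptions the result matches the 0.60% shown above.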

One bad drive

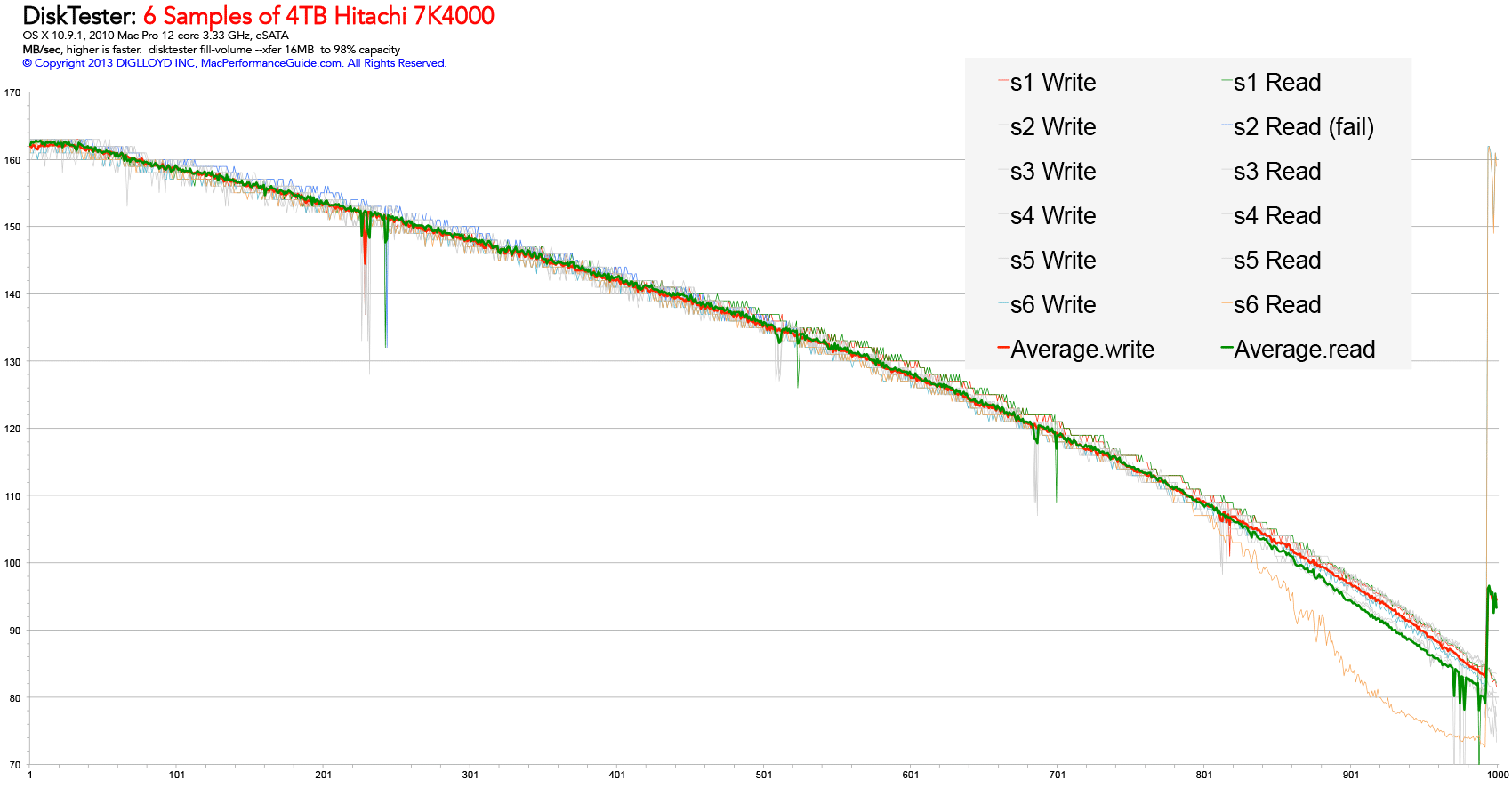

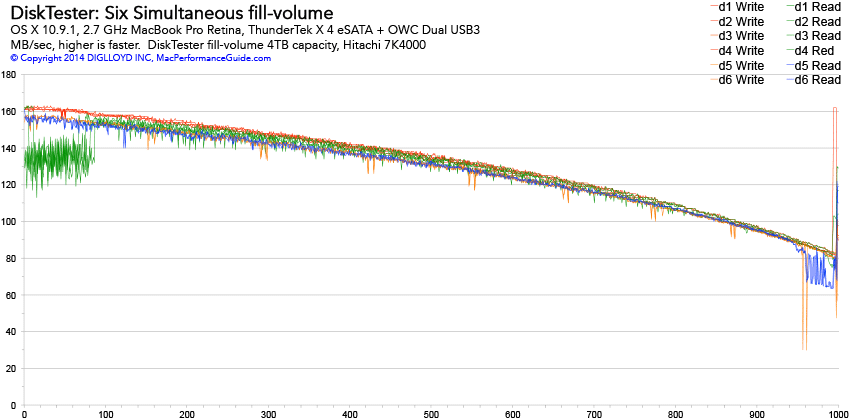

Graphed below are write and read speed across 99% of the 4TB capacity for all five drives. The performance pattern is highly consistent among the five drives; they overlap nicely—perfect for RAID.

One sample ('s2', blue line) failed the read portion of the test with a -36 I/O error. While all data to that point verified, an I/O error is a “hard” error generated by the device; the drive cannot read data successfully a little more than halfway through its capacity.

Later, a 6th sample was tested on USB3 and it showed consistent performance until about 80% of the way out, then it plummeted in performance with a spike at the end, suggesting some bad block remapping might be going on.

FileReaderTask::ReadAll: exiting

Task FileReaderTask exited with error: disk error (I/O error) [-36]

Read 523 files totaling 1.89TB in 13258.3 seconds @ 150MB/sec

No verification failures
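With several drives running at once, it is easy to miss an error line in one of the windows. The sketch below scans a saved copy of the output for the two result lines seen above. It assumes the output was saved to a text file, and that the wording of the messages is the same as in this sample; other DiskTester versions may word things differently. The function name and the OK/FAIL/UNKNOWN labels are illustrative.

```shell
# check_fill_log FILE
# Returns 0 if the log looks clean, 1 if a task exited with an error,
# 2 if neither expected line is present (e.g. the run is not finished).
check_fill_log() {
    log=$1
    if grep -q 'exited with error' "$log"; then
        echo "FAIL: $(grep 'exited with error' "$log" | head -n 1)"
        return 1
    fi
    if grep -q 'No verification failures' "$log"; then
        echo "OK: no I/O error, no verification failures"
        return 0
    fi
    echo "UNKNOWN: no recognizable result line in $log"
    return 2
}
```

The error check comes first on purpose: as in the sample above, a run can report "No verification failures" and still have stopped early on an I/O error.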

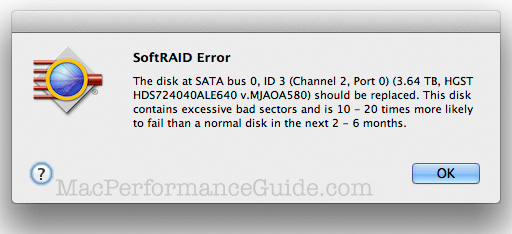

DiskTester forced the error during the write/read phase; SoftRAID confirms the problem drive after the fact via SMART status.

As many as desired

Have a 5/6/8-bay unit? Verify each drive simultaneously. This works great so long as the unit has the bandwidth to support that much simultaneous disk I/O. Otherwise, it can still be done, but the speed will be constrained by the bus.

Open one Terminal window for each drive and invoke one disktester for each:

disktester fill-volume volume1 # in the 1st Terminal window

disktester fill-volume volume2 # in the 2nd Terminal window

disktester fill-volume volume3 # in the 3rd Terminal window

... etc ...

The "#" and everything after it is just a comment; don't type it in. Use quotes around the volume name if it contains a space (spaces are discouraged as a hassle for that reason).
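For those who prefer a single Terminal window, the same thing can be done by sending each run to the background. This is a sketch, not a DiskTester feature: the function name, the log folder argument, and the log file naming are all assumptions. Only the "disktester fill-volume" invocation itself comes from the commands above.

```shell
# fill_all LOG_DIR VOLUME...
# Starts one "disktester fill-volume" per volume, all at the same time,
# and saves each run's output to LOG_DIR/<volume>.log.
fill_all() {
    log_dir=$1
    shift
    mkdir -p "$log_dir" || return 1
    for vol in "$@"; do
        # Each run goes to the background with its own log file.
        disktester fill-volume "$vol" > "$log_dir/$vol.log" 2>&1 &
    done
    # Block until every background run has finished.
    wait
}

# Example (not run here):
#   fill_all ~/disktester-logs volume1 volume2 volume3
```

Separate Terminal windows make it easier to watch progress live; the background approach trades that for saved logs that can be reviewed afterwards.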

A lot of time can be saved this way, since a single 4TB drive takes around 14 hours to write/read via DiskTester fill-volume. For example, by running 4 drives in parallel, the total time is still ~14 hours, not 56 hours.
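The time arithmetic is simple enough to check for any number of drives. This sketch assumes every drive takes the same time and that the bus is not the bottleneck when running in parallel; the function name and its "sequential"/"parallel" keywords are illustrative.

```shell
# total_hours MODE DRIVE_COUNT HOURS_PER_DRIVE
# MODE is "sequential" (one drive after another) or "parallel" (all at once).
total_hours() {
    case $1 in
        sequential) echo $(( $2 * $3 )) ;;
        parallel)   echo "$3" ;;
        *) echo "usage: total_hours sequential|parallel DRIVES HOURS" >&2
           return 1 ;;
    esac
}

total_hours sequential 4 14   # prints 56
total_hours parallel 4 14     # prints 14
```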


Results

This test represents writing 24TB of data and reading 24TB of data across six 4TB drives! It was run on a MacBook Pro Retina 2.7 GHz.

Given that six drives were being tested simultaneously, the read-speed blip at left for the first four drives d1, d2, d3, d4 might be a Thunderbolt bandwidth limit. Similarly, the slightly slower read/write speed (overall, vs Thunderbolt) for the two USB3 drives is not definitive given the testing circumstances.

Given the test conditions of 6 simultaneous drives, the results look just fine here; the green glitch at left is presumed to be a bandwidth or system limitation.

diglloydTools™
