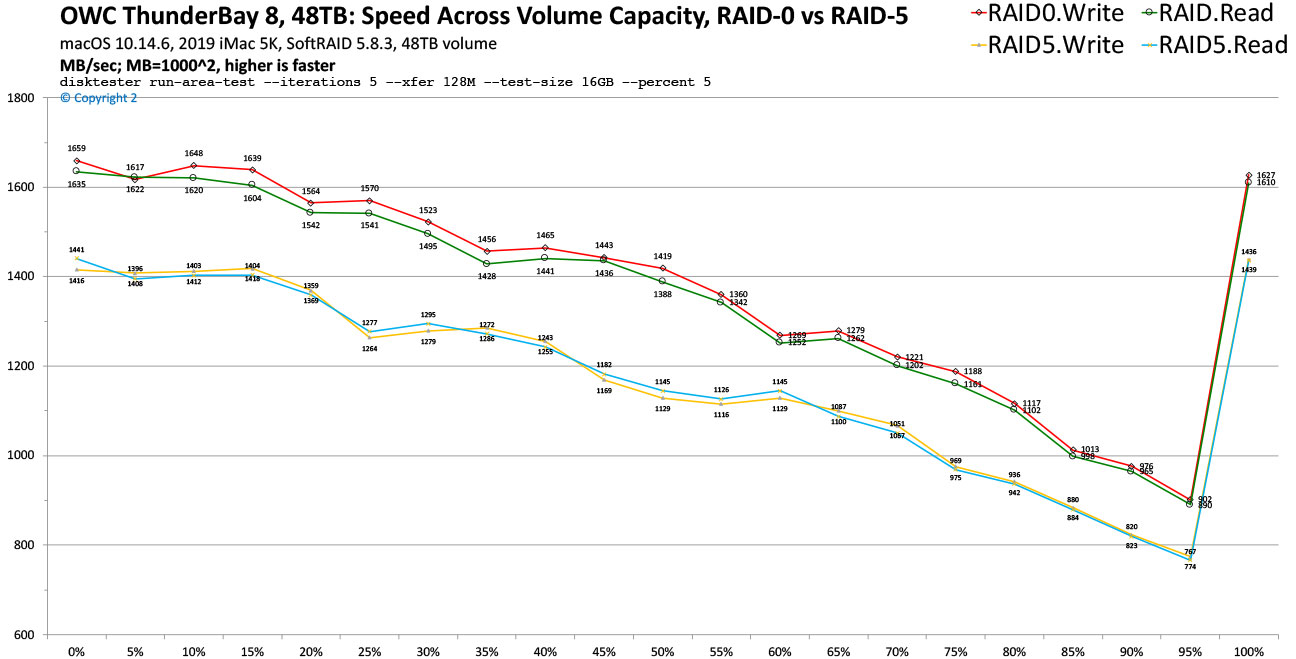

OWC ThunderBay 8: Speed Across Volume Capacity, RAID-0 vs RAID-5


Configurations are available up to 128TB (8 X 16TB). Larger capacities might become available later in 2020. Various drive choices are available, including standard and enterprise drives. Includes SoftRAID Lite or SoftRAID XT, which can also be used in a non-RAID configuration.

MPG tested the 48TB OWC ThunderBay 8 with eight Toshiba MD04ACA 6TB hard drives (128MB cache per drive), using the full version of SoftRAID 5.8.3.

This page evaluates RAID-0 vs RAID-5 performance of the OWC ThunderBay 8 across the entire capacity of the volume—48TB for RAID-0 and 42TB for RAID-5.

Tip: buy more capacity than needed in order to sustain higher performance for the same amount of data

Hard drives slow down as they fill up. The outer tracks deliver much more data per rotation than the inner tracks: data density is constant, but the outer tracks have a much greater circumference, so each revolution of the platters passes more data under the heads. Performance therefore drops substantially as slower and slower parts of the hard drives are used. This is true of ALL hard drives, but not SSDs.
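The geometry described above can be sketched as a rough model. This is only an illustration, assuming constant rotational speed, constant data density, and a drive that fills from the outer edge inward; the platter radii are hypothetical round numbers, not measurements of the drives tested here.

```python
import math

# Hypothetical platter geometry (assumed for illustration, not measured).
OUTER_MM = 46.0  # radius of the outermost data track
INNER_MM = 21.0  # radius of the innermost data track


def radius_at_fill(fraction: float) -> float:
    """Track radius reached after filling `fraction` of the platter.

    With constant data density, stored data is proportional to platter
    area, so the area between the outer edge and radius r holds:
        fraction = (OUTER^2 - r^2) / (OUTER^2 - INNER^2)
    """
    r_squared = OUTER_MM**2 - fraction * (OUTER_MM**2 - INNER_MM**2)
    return math.sqrt(r_squared)


def throughput_at_fill(fraction: float) -> float:
    """Throughput relative to the outermost track (1.0 = fastest).

    At constant RPM, data per revolution scales with circumference,
    which scales with radius.
    """
    return radius_at_fill(fraction) / OUTER_MM


if __name__ == "__main__":
    for pct in range(0, 101, 10):
        rel = throughput_at_fill(pct / 100)
        print(f"{pct:3d}% full: {rel:.2f} x outer-track speed")
```

With these assumed radii the innermost tracks run at a bit under half the speed of the outermost ones. Real drives use zoned recording and differ in geometry, so treat the curve as a shape, not a prediction.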

Creating a volume using less than the full capacity confines that volume’s storage area to the faster tracks (the first volume created gets the outer, fastest tracks; the next volume gets the next-fastest tracks, and so on).

When a sustained minimum performance is a consideration (e.g., for real-time video applications or simply a desire for some minimum performance), MPG recommends buying more capacity than needed and using less than the maximum capacity.

For example, as shown here with 8 X 6TB = 48TB capacity (42TB with RAID-5), to guarantee a minimum of 1000 MB/sec the cutoff for RAID-5 is about 70% of maximum capacity (29TB) and for RAID-0 it is about 85% of maximum capacity (41TB). The remaining space can be ignored (left unused) or a 2nd volume can be created (e.g., Spare or Scratch or whatever).
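The arithmetic behind those cutoffs can be checked with a few lines. This is a minimal sketch: the function names are hypothetical, and the 70% and 85% cutoffs are the approximate figures quoted above for this particular 8 X 6TB configuration, not general constants.

```python
def raid_capacity_tb(drives: int, drive_tb: float, level: str) -> float:
    """Usable capacity of a stripe (RAID-0) or single-parity set (RAID-5)."""
    if level == "raid0":
        return drives * drive_tb
    if level == "raid5":
        # One drive's worth of space is consumed by parity.
        return (drives - 1) * drive_tb
    raise ValueError(f"unsupported RAID level: {level}")


def fast_region_tb(capacity_tb: float, cutoff_fraction: float) -> float:
    """Capacity that stays above the target speed, given a cutoff fraction."""
    return capacity_tb * cutoff_fraction


if __name__ == "__main__":
    raid0 = raid_capacity_tb(8, 6, "raid0")
    raid5 = raid_capacity_tb(8, 6, "raid5")
    print(f"RAID-0: {raid0:.0f}TB total, {fast_region_tb(raid0, 0.85):.1f}TB fast")
    print(f"RAID-5: {raid5:.0f}TB total, {fast_region_tb(raid5, 0.70):.1f}TB fast")
```

The same two functions can be rerun with other drive counts or sizes, but the cutoff fractions would need to be measured again for a different drive model.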

Test results, RAID-0 vs RAID-5 across volume capacity

Test mule was the 2019 iMac 5K. The run-area-test command of diglloydTools Disktester was used. Here it was invoked to test performance at 5% steps across the capacity, using a 16GiB area at each step, five iterations, and 128MiB transfer sizes. This greatly reduced the testing time; running fill-volume was not feasible in terms of time or electricity while traveling in my Sprinter van.

disktester run-area-test --iterations 5 --xfer 128M --test-size 16GB --delta-percent 5

While run-area-test is an approximation of the fill-volume test, the 5% spacing across the capacity yields essentially the same insights, except that the last section typically jumps up in performance due to foibles of file system block allocation. Also, fill-volume writes and reads all available blocks, which is ideal when putting drives into service, but it costs days of testing time with large volumes, versus an hour or less with run-area-test.
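A back-of-the-envelope estimate shows why the sampled test is so much quicker. This sketch is not Disktester's actual logic: it assumes one 16GiB area per 5% step, one write pass plus one read pass per iteration, and a hypothetical average speed of 1200 MB/sec across the volume.

```python
GIB = 2**30
TB = 10**12


def area_test_bytes(delta_percent: int, area_bytes: int,
                    iterations: int, passes: int = 2) -> int:
    """Total I/O for a sampled test: one area per step, written and read."""
    steps = 100 // delta_percent
    return steps * area_bytes * iterations * passes


def fill_volume_bytes(volume_bytes: int, passes: int = 2) -> int:
    """Total I/O for writing and then reading every block of the volume."""
    return volume_bytes * passes


def hours(total_bytes: int, mb_per_sec: float) -> float:
    """Elapsed hours at a given average throughput (MB = 10^6 bytes)."""
    return total_bytes / (mb_per_sec * 1e6) / 3600


if __name__ == "__main__":
    avg_speed = 1200.0  # assumed average MB/sec, for illustration only
    sampled = area_test_bytes(5, 16 * GIB, 5)
    full = fill_volume_bytes(48 * TB)
    print(f"sampled test: {sampled / TB:.2f}TB of I/O, "
          f"{hours(sampled, avg_speed):.1f} h")
    print(f"full fill:    {full / TB:.0f}TB of I/O, "
          f"{hours(full, avg_speed):.1f} h")
```

Under these assumptions the sampled test moves a few terabytes while a full fill of the 48TB volume moves roughly thirty times as much, and larger volumes scale the gap further.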

On the fastest part of the volume, RAID-0 delivers a blazing fast 1660 MB/sec, with RAID-5 not far off at 1440 MB/sec.

As the test progresses deeper into the volume capacity, the data per hard drive platter revolution decreases, and thus the speed steadily drops off. Speed is roughly cut in half by the time the volume reaches capacity. This is why buying more capacity than “needed” is wise—to keep performance higher.

Vertical scale is MB/sec. Horizontal scale shows percentage of volume capacity, e.g., 50% of a 48TB RAID-0 stripe is 24TB into the capacity. The RAID-5 volume had a 42TB capacity.

The foibles of file system block allocation cannot be controlled via API, so the last area tested actually gets allocated near the beginning. This behavior is consistent, but can vary depending on the way in which data is written sparsely to the volume.

diglloydTools™
