2013 Mac Pro: Algorithms and Performance


Get Mac Pro at B&H Photo. See also MPG’s computer gear wishlist as well as diglloyd-recommended performance packages for Mac Pro.

This page discusses software support and algorithmic approaches as general background. It can safely be skipped by most readers.

For performance, the choice of CPU and GPU depends on many factors. There is no easy answer as to what is fastest; many times “faster” actually means “no difference”.

CPUs underutilized: serialized code

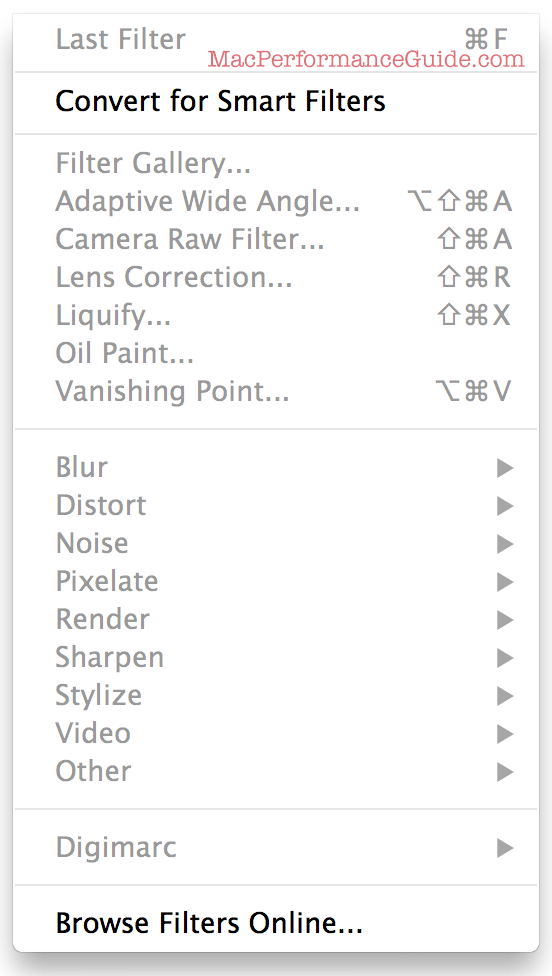

8 layers selected, all filters disabled: no parallel processing!

There are many non-video programs that use GPUs: Photoshop, Lightroom, some raw converters, various filters and so on.

Yet many applications already have ample CPU power at their disposal that goes grossly underutilized. How can fast GPUs speed up software that runs slowly because it is structured in a way that defeats concurrent operation?

Let’s consider Photoshop: it leaves most CPU cores idle most of the time on a 12-core Mac Pro*. Moreover, there are baked-in algorithmic defects like serialized file-by-file batch processing and the lack of any option to sharpen layers in parallel.

Will fast GPUs change most of this? Absolutely not. Fixing these problems requires structural changes to the software. Making those changes for CPU utilization alone, with no GPU support at all, would be a huge gain in many situations that your author endures daily.

* Your author has yet to find any workflow task for which Photoshop uses more than 8 cores (out of 12) for more than a sliver of time. Most of the time an average of 2-3 CPU cores are used!
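As a rough illustration of the structural point, the sketch below contrasts serialized layer-by-layer processing with handing the same independent jobs to a pool of workers. It is a minimal Python sketch under stated assumptions, not a description of how Photoshop works internally: `sharpen` is a hypothetical stand-in for a CPU-heavy filter, layers are modeled as plain lists of 8-bit pixel values, and the workload in `run_demo` is synthetic.

```python
import concurrent.futures as cf
import os


def sharpen(layer):
    """Hypothetical stand-in for a CPU-heavy filter: scale by 6/5, clamp to 255."""
    return [min(255, p * 6 // 5) for p in layer]


def process_serial(layers):
    # One layer after another: only one core does useful work.
    return [sharpen(layer) for layer in layers]


def process_parallel(layers, executor: cf.Executor):
    # Layers are independent of each other, so each can go to its own worker.
    # Executor.map returns results in the same order as the inputs.
    return list(executor.map(sharpen, layers))


def run_demo():
    # Assumed workload: 8 synthetic layers of 200,000 pixels each.
    layers = [[(i * 37 + j) % 256 for j in range(200_000)] for i in range(8)]
    with cf.ProcessPoolExecutor(max_workers=os.cpu_count()) as pool:
        # Both strategies must produce identical results.
        return process_parallel(layers, pool) == process_serial(layers)
```

Calling `run_demo()` from a script's `if __name__ == "__main__":` block should return `True`. Processes are used rather than threads because pure-Python CPU work does not run in parallel across threads in CPython builds with the global interpreter lock. The same per-item structure would apply to batch processing of files, where each file is an independent job.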

History

Let’s look at history for a reality check. For the past decade high-end users have enjoyed systems with 4/6/8/12 cores, with 2009 systems offering up to 8 fast CPU cores. Even laptops have four cores here in 2013. But what is the reality nearly five years later?

- How many programs are written to utilize all CPU cores effectively? (Very few).

- Does Photoshop make it possible to perform parallel operations for common tasks (e.g. sharpen or blur 10 layers at once)? (No).

- How many programs with multi-core support use only a fraction of the cores most of the time? (Most).

- How many programs remain single-threaded and able to utilize only one CPU core and/or insist on serialized file-by-file operation at least for some operations? (Many if not most).

- How many programs lock the user out in modal ways while an operation is being performed, forcing the user to wait, thus making it impossible to initiate other operations? (Nearly all).

- How many programs have a computing paradigm that is inherently amenable to multi-core support? (Many applications have tasks that are inherently serial).

Algorithmic code quality will not leap forward with faster GPUs any more than it has with CPUs, but very powerful GPUs do offer one possible advantage: running specific sub-tasks 5X or 10X faster on a GPU (even within a serialized context) is still a big win for those tasks.
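How much that win helps the whole job depends on how much of the run time the accelerated sub-task accounts for. Amdahl's law gives the overall speedup as 1 / ((1 - f) + f / s), where f is the fraction of run time spent in the accelerated part and s is its speedup. The Python sketch below evaluates it. The fractions are hypothetical, chosen only for illustration; the 5X and 10X factors come from the paragraph above.

```python
def overall_speedup(fraction, factor):
    """Amdahl's law: speedup of the whole job when `fraction` of its
    run time is made `factor` times faster and the rest is unchanged."""
    if not 0.0 <= fraction <= 1.0 or factor <= 0:
        raise ValueError("fraction must be in [0, 1] and factor positive")
    return 1.0 / ((1.0 - fraction) + fraction / factor)


if __name__ == "__main__":
    # Hypothetical fractions of total run time spent in the GPU-friendly sub-task.
    for fraction in (0.25, 0.50, 0.90):
        for factor in (5, 10):
            print(f"{fraction:.0%} of time, {factor}X faster sub-task: "
                  f"{overall_speedup(fraction, factor):.2f}X overall")
```

With half of the run time in the accelerated sub-task, a 10X faster GPU yields only about 1.8X overall, because the untouched serialized remainder then dominates.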

diglloydTools™
